In Why IP Address Certificates Are Dangerous and Usually Unnecessary, Andrew Ayer explains why certificates for IP addresses are insecure. Because IP addresses change hands quickly in cloud environments and the rules for validating control of an address are very lax, it is relatively easy for an attacker to hold a valid certificate for an IP address they are no longer authorized to represent.

The basic security property provided by a certificate is that the certificate authority has validated that the certificate subscriber (the person who applies for the certificate and knows its private key) is authorized to represent the domain name or IP address in the certificate. This ensures that the other end of a TLS connection is truly the domain or IP address that you want to connect to, not a MitM impostor.

But the validation is not done every time a TLS connection is established; rather, it was done at some point in the past. Thus, the certificate subscriber may no longer be authorized to represent the domain or IP address.

How old might the validation be? As of February 2026, certificate authorities are allowed to issue certificates that are valid for up to 398 days. So the validation may be 398 days old. But it gets worse. When issuing a certificate, CAs are allowed to rely on a validation that was done up to 398 days prior to issuance. So when you establish a TLS connection, you may be relying on a validation that was performed a whopping 796 days ago. You could be talking not to the current assignee of the domain or IP address, but to anyone who was assigned the domain or IP address at any point in the last 2+ years.
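The arithmetic in the quote above can be checked with a short sketch. The function names and the example connection date are illustrative assumptions; only the 398-day figures come from the quoted text.

```python
from datetime import date, timedelta


def worst_case_validation_age(max_cert_lifetime_days: int,
                              max_validation_reuse_days: int) -> int:
    """Oldest possible validation (in days) behind a still-valid certificate.

    The CA may reuse a validation done up to `max_validation_reuse_days`
    before issuance, and the certificate may then be used until the last
    day of its `max_cert_lifetime_days` lifetime.
    """
    return max_cert_lifetime_days + max_validation_reuse_days


def oldest_validation_date(connection_date: date,
                           max_cert_lifetime_days: int,
                           max_validation_reuse_days: int) -> date:
    """Earliest date on which the validation you rely on may have happened."""
    age = worst_case_validation_age(max_cert_lifetime_days,
                                    max_validation_reuse_days)
    return connection_date - timedelta(days=age)


age = worst_case_validation_age(398, 398)
print(age)                       # 796
print(round(age / 365.25, 2))    # 2.18 (years)

# Hypothetical connection date, chosen only for illustration.
print(oldest_validation_date(date(2026, 2, 1), 398, 398))
```

The sum is a worst case: it assumes the CA reused the oldest validation it was allowed to and that the connection happens on the certificate's last day of validity.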

The same problem evidently exists for domains too, but the domain namespace is far larger than the IPv4 address space, so in practice it is rarely an issue there:

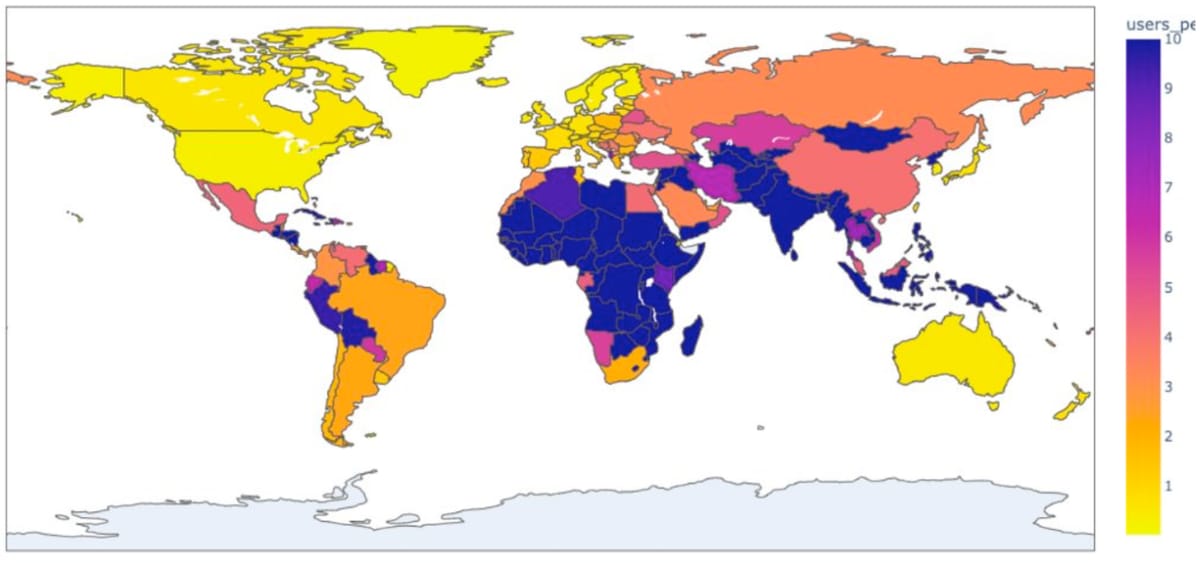

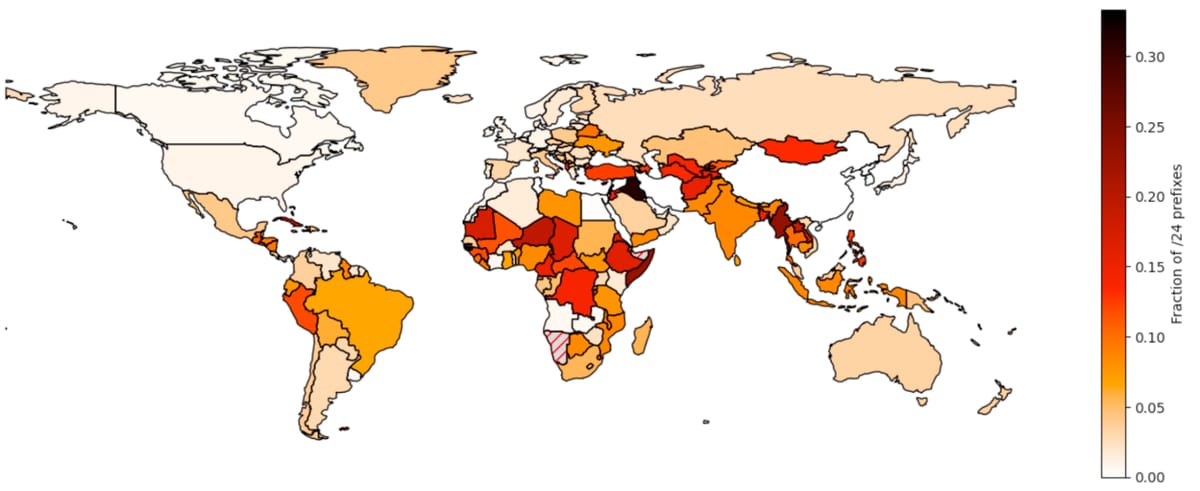

This is a problem with both domains and IP addresses, but it's way worse with IP addresses. While it's still very possible to register a domain that no one has ever registered before, you don't have this luxury with IPv4 addresses. There are no unassigned IPv4 addresses left; when you get an IPv4 address, it has already been assigned to someone else.

This exposure will shrink as the maximum certificate lifetime is reduced (47 days plus a 10-day validation reuse period in 2029). In the meantime, you can consult or monitor Certificate Transparency logs (e.g. crt.sh) to see which certificates have been issued for an IP address or a domain.
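As a rough sketch of such a lookup, the snippet below builds a crt.sh search URL and summarizes the returned entries. It assumes crt.sh's `output=json` query option and the `not_before`, `not_after`, and `issuer_name` fields in its JSON response; the helper names and the example address (from the 203.0.113.0/24 documentation range) are hypothetical, and whether a given IP address is matched depends on how crt.sh indexes the certificate.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen


def crtsh_url(identity: str) -> str:
    """Build a crt.sh search URL for a domain or IP address (JSON output)."""
    return "https://crt.sh/?" + urlencode({"q": identity, "output": "json"})


def summarize(entries: list[dict]) -> list[tuple[str, str, str]]:
    """Reduce crt.sh entries to unique (not_before, not_after, issuer) rows.

    Assumes each entry carries the `not_before`, `not_after`, and
    `issuer_name` keys; duplicates (e.g. precertificate plus final
    certificate) collapse into one row.
    """
    rows = {(e["not_before"], e["not_after"], e["issuer_name"]) for e in entries}
    return sorted(rows)


def fetch_certificates(identity: str, timeout: float = 30.0) -> list[dict]:
    """Query crt.sh over the network and return the decoded JSON list."""
    with urlopen(crtsh_url(identity), timeout=timeout) as resp:
        return json.load(resp)


if __name__ == "__main__":
    # Example address only; replace with the IP or domain you control.
    for not_before, not_after, issuer in summarize(fetch_certificates("203.0.113.10")):
        print(not_before, not_after, issuer)
```

Any row whose validity window overlaps a period when you held the address, but that you did not request yourself, is worth investigating. For continuous monitoring, a dedicated Certificate Transparency monitor is likely a better fit than polling this endpoint.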