A major refactoring of GitHub Actions:

In early 2024, the GitHub Actions team faced a problem. The platform was running about 23 million jobs per day, but month-over-month growth made one thing clear: our existing architecture couldn’t reliably support our growth curve. In order to increase feature velocity, we first needed to improve reliability and modernize the legacy frameworks that supported GitHub Actions.

The solution? Re-architect the core backend services powering GitHub Actions jobs and runners.

Since August, all GitHub Actions jobs have run on our new architecture, which handles 71 million jobs per day (over 3x from where we started). Individual enterprises are able to start 7x more jobs per minute than our previous architecture could support.

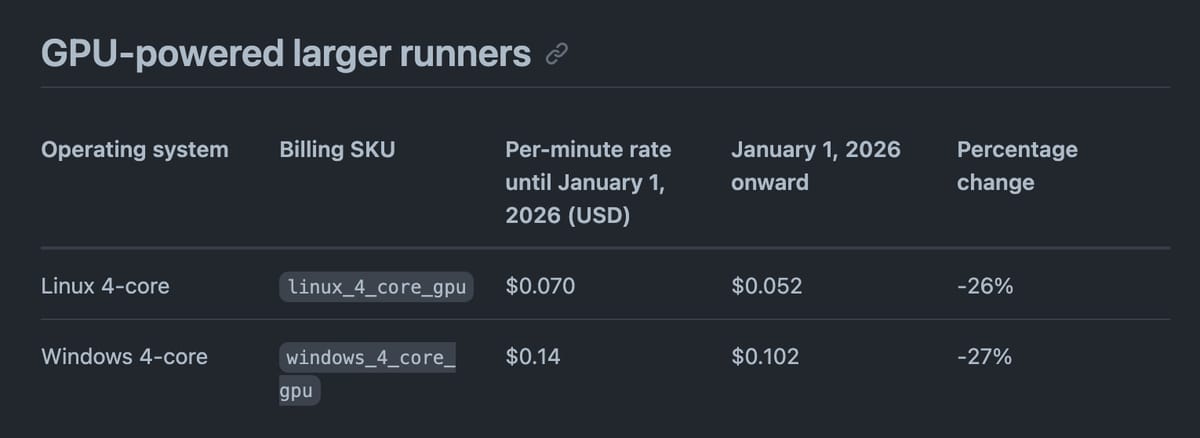

New, lower prices:

But a new fee of $0.002/min shows up for self-hosted runners.
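To get a feel for what that rate means in practice, here is a rough back-of-the-envelope sketch. Only the $0.002/min figure comes from the announcement; the workload numbers and the `self_hosted_fee` helper are made up for illustration, and the calculation ignores any included minutes or other pricing terms.

```python
from decimal import Decimal

# Per-minute fee quoted above for self-hosted runners (USD).
FEE_PER_MINUTE = Decimal("0.002")


def self_hosted_fee(minutes: int) -> Decimal:
    """Return the fee in USD for a number of self-hosted runner minutes.

    Hypothetical helper: a flat per-minute rate, nothing else.
    """
    return FEE_PER_MINUTE * minutes


# Hypothetical workload: 500 jobs per day, 10 minutes each, over 30 days.
monthly_minutes = 500 * 10 * 30  # 150,000 minutes
print(self_hosted_fee(monthly_minutes))  # 300.000
```

So a team burning 150,000 self-hosted minutes a month would pay about $300 under these assumptions.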

Alternative hosted-runner providers are trying to put a positive spin on it. Blacksmith.sh:

In the past, our customers have asked us how GitHub views third-party runners long-term. The platform fee largely answers that: GitHub now monetizes Actions usage regardless of where jobs run, aligning third-party runners like Blacksmith as ecosystem partners rather than workarounds.

Depot, on the other hand, took it badly.